December 22, 2017 | Antivirus for Windows

Endurance Test: Protection of Corporate Solutions in Focus

When selecting a security solution for corporate users, the key criterion is always its protection. The lab at AV-TEST examined 14 corporate solutions in a 4-month endurance test.

To be sure, the protection provided by a corporate security suite is but one of the three areas examined in the test lab of AV-TEST. However, it is the core function and the one most closely scrutinized. The testing time and effort required in the lab are very high, but they deliver reliable results.

14 solutions – over 20,000 attacks

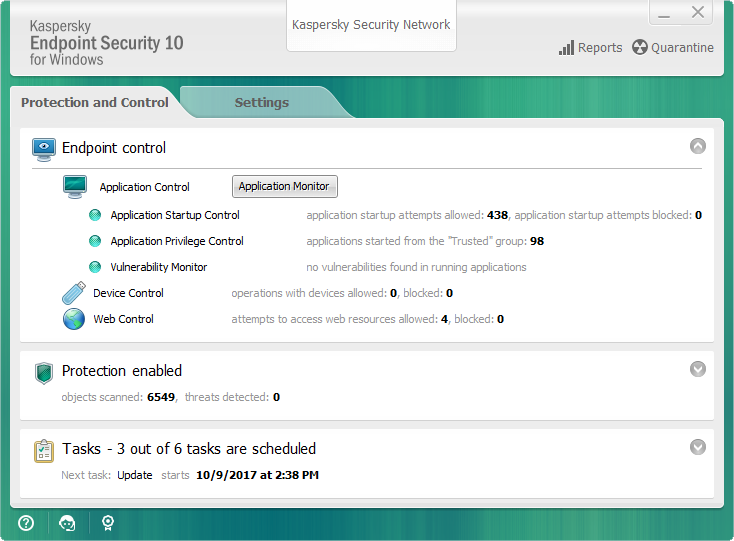

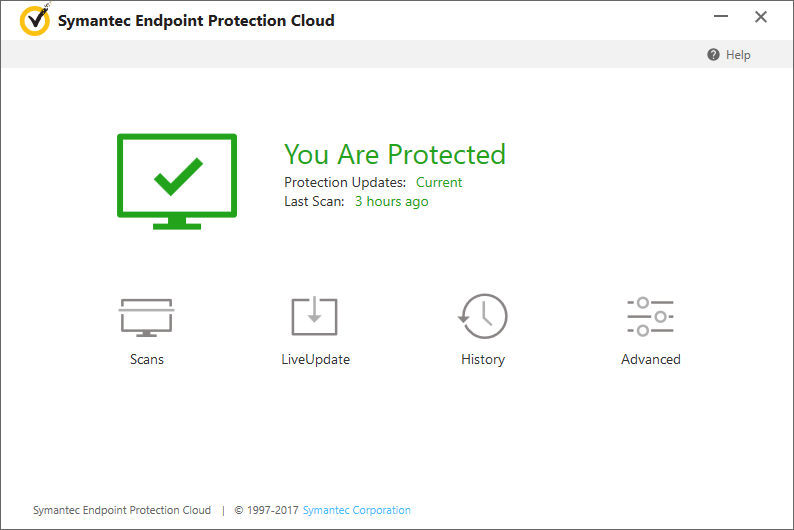

The test included the corporate security suites from Avast, Bitdefender, F-Secure, G Data, Kaspersky Lab (with Endpoint and Small Office Security), McAfee, Microsoft, Palo Alto Networks, Seqrite, Sophos, Symantec (with Endpoint Protection and Endpoint Protection Cloud) and Trend Micro. The test period lasted from July to October 2017. Every product was examined each month in a complete test routine with the real-world test and the AV-TEST reference set. The sets contained a total of 400 still new, unknown malware threats and 20,590 samples of already known malware.

The table shows the final tally of how well the individual products performed in terms of protection. Over the four months, the solutions from Bitdefender, Kaspersky Lab (both product versions) and Symantec (both versions) identified and eliminated all malware, whether new or known: 20,990 samples in all.

Detection rates in the endurance test

Throughout the entire test period, the products of Bitdefender, Kaspersky Lab and Symantec detected all the malware threats.

Endurance test protection

In order to make it easier to compare the test results, the lab awards up to six points for protection.

Bitdefender Endpoint Security

The security solution for corporate users demonstrated 100% detection in the endurance test.

Nearly 21,000 test cases per product

A vast amount of time and effort goes into testing in the lab. After all, each product is required to undergo the real-world test and the reference test multiple times.

Real-world test: The term "real-world" refers to the malware samples used in the test. On the day of the test, they are collected, sorted and then partially deployed. For this, AV-TEST uses its own honeypots, e.g. unprotected PCs that are attacked while surfing the Web. The team registers the visited and infected websites in a special database and evaluates the type of attack. Likewise, the testers use email honeypots, i.e. multiple mail accounts containing messages with malicious files, links to files or links to websites with malware. Each discovered malware sample is analyzed, i.e. precisely documented in terms of what it does to a system. The resulting action list of a malware sample is used in the test to validate whether the security solution really blocks all actions.

All discovered malware samples are required to be new and not already registered in the databases. If they are already on file, but not more than two weeks old, then they are placed onto the list of possible candidates for the second test phase with the reference set.
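The sorting rule described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `triage_sample`, the category labels and the treatment of samples older than two weeks (simply excluded here) are hypothetical and not taken from AV-TEST's actual tooling.

```python
from datetime import date, timedelta
from typing import Optional

# "Not more than two weeks old", per the article.
MAX_REFERENCE_AGE = timedelta(days=14)


def triage_sample(first_seen: Optional[date], test_day: date) -> str:
    """Sort a discovered sample into a test pool.

    first_seen is None when the sample is not yet registered in the
    database (hypothetical representation of "new").
    """
    if first_seen is None:
        # New, unregistered sample: eligible for the real-world test.
        return "real_world"
    if test_day - first_seen <= MAX_REFERENCE_AGE:
        # Already on file, but recent: candidate for the reference set.
        return "reference_candidate"
    # Assumption: anything older is not used in either test phase.
    return "excluded"
```

For example, a sample first registered 11 days before the test day would land in the pool of reference-set candidates, while an unregistered one would go to the real-world test.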

The actual real-world test proceeds as follows: The test product is installed on a Windows test PC (AV-TEST uses many totally identical PCs). For consumer software, the lab uses the default configuration of the product. For business products, the lab uses the configuration recommended by the manufacturer. The products can be updated at any time via online update and use their cloud services. A solution can thus rely on help from the cloud if the local detection techniques are exhausted. This mirrors a normal real-life scenario. Afterwards, the security solution is tested with the defined group of zero-day malware. In doing so, records are kept on how the security solution responds.

AV-TEST reference set: For this special set, the experts collect the malware samples according to the same system as for the real-world test. However, the malware samples, usually numbering more than 5,000, are already up to two weeks old and therefore already quite well known. The purpose of the exercise is to evaluate whether the products detect, with a slight delay, threats that initially went undetected.

The reference set naturally does not consist of only one special group, such as Trojans. The lab ensures a good mix of backdoors, bots, viruses, Trojans, worms, downloaders, droppers and password stealers. But the prevalence of a malware threat also plays a role: only if it is relevant and widely distributed (confirmed by at least two independent sources) is it potentially included in the set. The lab enlists the help of telemetry data and information from companies, research institutions and manufacturers of security software to evaluate whether a malware sample is widely distributed.

In this test as well, the security solution is reinstalled on a PC and activated. Afterwards, the solution scans the sample set and detects the attackers (on-demand scan). Any malware not detected in the process is then launched individually in order to test the on-execution protection. If a product works without static protection, i.e. only with behavior-based detection, then naturally all files are launched in the test. As the malicious files have been known for up to two weeks, the products ought to detect them practically without exception. Many products are capable of doing so, but not all. In this test phase as well, each security product always has access to its extended security techniques in the cloud.
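The two-stage procedure for the reference set can be summarized in a short Python sketch. The function `run_reference_test` and its callback parameters are hypothetical stand-ins for the product's on-demand scan and on-execution protection; they do not represent any real product API or the lab's actual test harness.

```python
from typing import Callable, Iterable, List


def run_reference_test(
    samples: Iterable[str],
    on_demand_detects: Callable[[str], bool],
    on_execution_blocks: Callable[[str], bool],
    has_static_protection: bool = True,
) -> List[str]:
    """Return the samples that the product failed to stop.

    Stage 1: on-demand scan (skipped for purely behavior-based products).
    Stage 2: every sample not caught in stage 1 is launched individually.
    """
    missed = []
    for sample in samples:
        if has_static_protection and on_demand_detects(sample):
            continue  # caught by the on-demand scan
        if on_execution_blocks(sample):
            continue  # caught when launched
        missed.append(sample)
    return missed
```

With `has_static_protection=False`, the on-demand stage is skipped and every file is launched, which matches the handling described for products that rely solely on behavior-based detection.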

Success means successfully blocking malware

In the tests, all individual results per product are registered and evaluated. Only the following scenarios, however, are considered "successful detection":

- Access to the URL is blocked

- An exploit on the website is detected and blocked

- A download of malicious components is blocked

- The launching of malicious components is blocked

In the test, merely detecting or warning against a malicious file or link is not considered a sufficiently successful defense. The access or execution must be effectively blocked.
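The scoring rule can be expressed as a simple classification. The following Python sketch is an assumption-laden illustration: the `Outcome` names are invented for this example, and only the four blocking scenarios listed above count as success.

```python
from enum import Enum, auto


class Outcome(Enum):
    """Hypothetical labels for what a product did with one test case."""
    URL_BLOCKED = auto()
    EXPLOIT_BLOCKED = auto()
    DOWNLOAD_BLOCKED = auto()
    EXECUTION_BLOCKED = auto()
    WARNED_ONLY = auto()      # user was warned, but access was possible
    DETECTED_ONLY = auto()    # file was flagged, but still ran
    NO_REACTION = auto()


# Only outcomes in which access or execution was actually prevented.
SUCCESSFUL = frozenset({
    Outcome.URL_BLOCKED,
    Outcome.EXPLOIT_BLOCKED,
    Outcome.DOWNLOAD_BLOCKED,
    Outcome.EXECUTION_BLOCKED,
})


def is_successful(outcome: Outcome) -> bool:
    """A warning or a bare detection does not count as a defense."""
    return outcome in SUCCESSFUL
```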

Important to know: All malware samples, infected websites and emails used in the test were collected by the lab. The laboratory does not rely on material from other sources, e.g. from manufacturers or from public databases. In order for the test to be the same for all products, the test steps run simultaneously for all products on a series of identical Windows PCs.

The results in detail

In the current endurance test from July to October 2017, five solutions demonstrated the best performance: the two versions each from Kaspersky Lab and Symantec, and the solution from Bitdefender. Across all test phases, they detected all 400 attackers in the real-world test and all 20,590 malware samples from the reference set.

The products from McAfee and Trend Micro each failed to detect only one individual attacker in the real-world test. The solution from F-Secure also had minor detection difficulties with five attackers. In terms of their protection, all the products mentioned achieved the maximum score of six points – the laboratory awards points in order to make it easier to compare and assess the performance of the security products.

The solutions from G Data and Seqrite failed to detect 21 and 22 malware threats respectively; in each case, 4 of these were zero-day malware.

The free protection module from Microsoft, System Center Endpoint Protection, failed to detect 51 attackers. For Sophos and Avast, the number of undetected malware samples reached 107 and 190 respectively. The product from Palo Alto Networks was unable to block 287 attacks.
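To put the miss counts into perspective, they can be converted into detection rates over the full set of 20,990 samples. The Python sketch below assumes that each quoted count is the total number of misses across both the real-world test and the reference set; the article does not break the figures down further, and the function name `detection_rate` is chosen for this example only.

```python
# Total test cases per product: 400 real-world + 20,590 reference samples.
TOTAL_SAMPLES = 400 + 20_590

# Undetected samples per vendor, as quoted in the article.
# Assumption: each figure is the total across both test sets.
MISSED = {
    "Bitdefender": 0,
    "Kaspersky Lab": 0,
    "Symantec": 0,
    "McAfee": 1,
    "Trend Micro": 1,
    "F-Secure": 5,
    "G Data": 21,
    "Seqrite": 22,
    "Microsoft": 51,
    "Sophos": 107,
    "Avast": 190,
    "Palo Alto Networks": 287,
}


def detection_rate(missed: int, total: int = TOTAL_SAMPLES) -> float:
    """Percentage of samples that were successfully blocked."""
    return 100.0 * (total - missed) / total


if __name__ == "__main__":
    for vendor, missed in MISSED.items():
        print(f"{vendor:20s} {detection_rate(missed):7.3f} %")
```

Even the weakest result in this test corresponds to a rate above 98 percent, which shows why the lab reports absolute miss counts as well: the differences between products are small in relative terms but substantial in the number of attacks that get through.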

AV-TEST – Storehouse institute for 700 million viruses of all kinds

Maik Morgenstern, CTO AV-TEST GmbH

In the AV-TEST databases, over 700 million malware samples have already been registered and catalogued.

The independent testing of antivirus products would never function objectively if the institute only received the malware samples from the manufacturers. That is why, over the past 15 years, AV-TEST has been collecting malware samples on the web, analyzing them and grouping them into categories. To do so, AV-TEST uses honeypot systems, among other tools. These contain vulnerable versions of Windows and of standard applications. The objective is to allow them to be infected by malware and then to analyze them in real time. These PCs accept everything that can be found on the Internet, such as infected websites or links to malicious files. In addition, AV-TEST uses many email accounts intended to collect as much spam as possible. These incoming spam and phishing emails have malware in tow or try to send the user via a link to a malicious website.

The lab also uses various search engines and social media services such as Twitter as additional sources to ferret out malware: every day, the experts search with the help of popular keywords in various search engines. The findings are then analyzed and links to malicious websites are logged. In addition, on behalf of users, the lab also checks interesting Twitter feeds containing links. They are tracked and the target address is evaluated. In this manner, the lab examines each day over 400,000 hits from search engines and over 1 million Twitter feeds.

The number of discovered malware samples per month is rapidly increasing: in 2007, it was still roughly 500,000; in 2017, roughly 10 million samples were added per month. That is growth by a factor of 20. In total, there are more than 700 million files. AV-TEST has not only registered all these malware versions but also saved them as samples. After all, some of the very latest attackers end up in the set for the real-world test, whereas a select group of the samples up to a maximum of two weeks old is used in the test as a reference set.